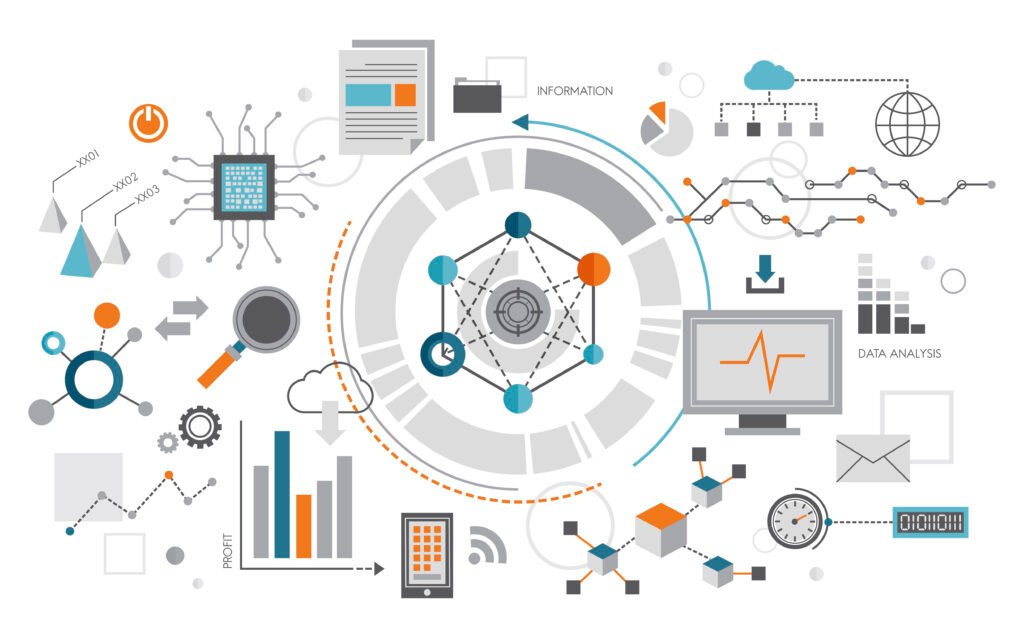

Drive Better Decisions With Actionable Data Insights

LogIQ labs builds the data infrastructure, pipelines, and platforms that turn messy, siloed data into clean, reliable insights, so your teams can make decisions they can actually trust.

Your Data Problem Isn’t Volume, It’s Plumbing

Most organisations have plenty of data. What they lack is a dependable way to move, clean, and deliver it where and when it is needed. That is the gap we close, with end-to-end solutions from data ingestion through to visualisation.

Build scalable pipelines that move data smoothly across systems and platforms. Ensure your data is always available where it’s needed.

Transform raw, messy data into organised and usable datasets. Improve data quality so teams can trust the insights they generate.

Connect data from multiple sources into a single, accessible environment. Give teams the visibility they need to analyse, report, and make decisions faster.

Technology Built Around Your Needs

Practical AI and software solutions designed to solve real business problems.

Data Warehouse & Lakehouse

Modern architectures on Snowflake, Databricks, BigQuery, and Redshift.

ETL/ELT Pipelines

Automated, monitored pipelines that move and transform data reliably at scale.

BI & Analytics Dashboards

Self-serve analytics in Tableau, Power BI, and Looker, built for actual users.

Real-Time Streaming

Apache Kafka, Flink, and Spark Streaming for live data that can’t wait.

Is your data holding your team back?

A 1-hour data architecture review: find out where the bottlenecks are.

DataOps: Speed, Quality, and Confidence at Scale

We build with DataOps principles: automated testing, schema validation, data lineage tracking, and CI/CD for pipelines. When something changes, you know about it before your users do.

Automated Data Pipeline Testing

We implement automated testing to ensure data pipelines run reliably. Errors are caught early, before they affect downstream systems or users.

Schema Validation & Data Quality

Validate data structures and formats as data flows through pipelines. This helps maintain consistency, accuracy, and trust in your data.
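As a rough illustration of what a schema check inside a pipeline stage can look like, here is a minimal Python sketch. The column names in `EXPECTED_SCHEMA` and the `validate_record` helper are hypothetical, chosen only for this example; a production pipeline would more likely rely on a dedicated validation or data quality tool.

```python
from typing import Any, Dict, List

# Hypothetical expected schema for an "orders" feed: column name -> required type.
EXPECTED_SCHEMA: Dict[str, type] = {
    "order_id": int,
    "customer_email": str,
    "amount": float,
}


def validate_record(record: Dict[str, Any], schema: Dict[str, type]) -> List[str]:
    """Return a list of problems found; an empty list means the record passes."""
    problems: List[str] = []
    # Check every expected column for presence and type.
    for column, expected_type in schema.items():
        if column not in record:
            problems.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            problems.append(
                f"{column}: expected {expected_type.__name__}, "
                f"got {type(record[column]).__name__}"
            )
    # Flag columns the schema does not know about (sorted for stable output).
    for column in sorted(record.keys() - schema.keys()):
        problems.append(f"unexpected column: {column}")
    return problems
```

A stage could call `validate_record` on each incoming row and route any row with a non-empty problem list to a quarantine table instead of passing it downstream.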

Data Lineage & Traceability

Track where data comes from and how it moves across systems. This provides full visibility and simplifies debugging and compliance.

CI/CD for Data Pipelines

Apply continuous integration and deployment to data workflows. Updates and changes are deployed safely with minimal disruption.

Cloud Data Platforms We Specialise In

Track progress through agile development, regular updates, and dedicated project management.

Snowflake – cloud-native warehousing with instant elasticity

Databricks – unified analytics and ML at scale

Google BigQuery – serverless analytics on petabyte datasets

AWS Redshift & Glue – enterprise ETL and warehousing on AWS

Azure Synapse – integrated analytics for Microsoft ecosystems

Data Governance You Can Actually Enforce

Ownership, classification, access control, and audit trails: we implement data governance frameworks that satisfy regulators and give your teams confidence in the data they’re using.

Clear Data Ownership

Define who owns, manages, and is accountable for every critical dataset. This ensures responsibility is clear and decisions about data are consistent.

Data Classification Frameworks

Organise data based on sensitivity, usage, and regulatory requirements. This helps teams handle information appropriately across systems and workflows.

Role-Based Access Control

Give the right people access to the right data at the right time. Permissions are structured to protect sensitive information while enabling productivity.

Comprehensive Audit Trails

Track every data interaction across your systems. Maintain transparency, accountability, and a complete history for compliance reviews.

Regulatory Compliance Alignment

Design governance practices that align with relevant regulations and industry standards. Ensure your organisation is always prepared for audits and regulatory checks.

Data Quality & Integrity Monitoring

Continuously monitor critical datasets for quality and integrity issues. Problems are flagged early, so teams can keep trusting the data they work with.

Clean Data. Fast Pipelines. Decisions You Can Rely On

Let’s build a data foundation your whole organisation can trust.

Frequently Asked Questions

This is the most common situation we walk into, and it’s completely solvable. We start with a data landscape audit: mapping all your data sources, understanding what’s in each, how it’s structured, and how frequently it changes. From there, we design a centralised architecture, typically a modern data lakehouse or warehouse, that brings everything together in one governed, queryable place. You don’t need to replace any of your source systems; we connect to them as they are and build the unification layer on top.

A data warehouse is structured and optimised for fast SQL queries, which makes it great for reporting and BI. A data lake stores raw data of any format cheaply at massive scale, but querying it is harder. A data lakehouse (think Databricks or Delta Lake) combines the best of both: flexible raw storage, warehouse-grade query performance, and ML-ready data in the same platform. For most modern businesses, a lakehouse is the right choice. For simpler BI-focused use cases, a cloud warehouse like Snowflake or BigQuery may be all you need. We’ll give you an honest recommendation based on your actual workloads.

Silent failures are every data team’s nightmare: dashboards showing wrong numbers with no warning. We address this with a comprehensive DataOps approach: automated data quality tests at every pipeline stage, schema validation checks, freshness alerts, and lineage tracking so you can trace any data point back to its source. Every pipeline we build comes with an observability layer, so you’ll know about a data issue before your stakeholders do, and often before it even reaches production.
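To make the idea of a freshness alert concrete, here is a minimal Python sketch. The `is_stale` helper and the one-hour threshold in the usage note are assumptions for illustration only; in practice a check like this usually lives in an orchestration or observability tool rather than in hand-written code.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_stale(
    last_loaded_at: datetime,
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> bool:
    """Return True when a table's latest load is older than the allowed age."""
    # Default to the current UTC time; tests can pass a fixed "now" instead.
    current = now if now is not None else datetime.now(timezone.utc)
    return current - last_loaded_at > max_age
```

For example, calling `is_stale(last_loaded_at, timedelta(hours=1))` on a schedule and raising an alert whenever it returns `True` would flag a table that has silently stopped updating.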